Maybe? Sometimes? But not across the board, at least not for me…

Over the course of the past two years, the idea of being able to easily create consistent characters across multiple images using generative AI has become something of a holy grail. Every couple of months during this time, one or more videos have appeared in my YouTube feed, claiming this feat is “finally possible,” usually detailing an incredibly complex and often rather janky workflow for achieving it (incorporating multiple/specific AI engines, Google Colab/Discord scripts, and/or much, much more). Although I’ve been mostly content coming up with new faces (and/or blending existing faces, which also inevitably generates new ones), I have engaged in my own experiments with these purportedly consistent character workflows more than once, but the intensity of the labor required versus the generally middling quality of the results has yet to provide any solution I’ve been eager to repeat ad nauseam.

Meanwhile, it’s clear that for some, this feat is already definitely possible. You can’t really have such a thing as a virtual influencer if it’s impossible to keep their face recognizable, constantly morphing from one “photo” to another. So, then, why haven’t I been able to master it yet?

From early in their evolution, generative AIs have allowed for a combination of text and/or image prompting, usually along with the ability to “weight” the relative influence of the text versus the uploaded image(s). For increased precision/granularity, it’s also possible to assign different weights to different words in your text prompts, as well as to choose between multiple types of data that can be extracted from existing images. Earlier this month, Midjourney announced a new “character reference” feature, implemented as a third option to specify how an image prompt will influence a new image generation (in addition to the existing “style reference” and “image reference” options, which I’m not going to get into any deeper here, so if you want more background on what those are / how those work, try this).
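For anyone who hasn't seen what this looks like in practice, here's a minimal sketch of how a character-referenced prompt gets assembled. It assumes Midjourney's `--cref` (character reference URL) and `--cw` (character weight, 0 to 100) parameters as I understand them; the helper name `build_prompt` and the example URL are hypothetical, made up purely for illustration.

```python
def build_prompt(text, cref_urls, character_weight=100):
    """Assemble a Midjourney-style prompt string with character references.

    Assumes the --cref / --cw parameter names; build_prompt itself is a
    hypothetical helper, not part of any official tool.
    """
    if not 0 <= character_weight <= 100:
        raise ValueError("character_weight must be between 0 and 100")
    parts = [text.strip()]
    if cref_urls:
        # One or more reference image URLs, separated by spaces.
        parts.append("--cref " + " ".join(cref_urls))
        # Lower weights lean on the face only; higher ones also pull in hair and outfit.
        parts.append(f"--cw {character_weight}")
    return " ".join(parts)


if __name__ == "__main__":
    # Placeholder URL, not a real reference image.
    print(build_prompt("photoreal portrait of a woman", ["https://example.com/ref.png"], 80))
```

The resulting string is what you would paste after `/imagine` in Discord; nothing here talks to Midjourney directly.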

This is deservedly big news for those of us who care at all about this shit, as Midjourney is arguably the most consistent engine out there in terms of generating high quality, photorealistic output. However, in my limited experimentation with character references thus far, it seems like yet another claim that is not quite true, at least not for the characters I’ve been trying to reproduce.

Admittedly, these are my first impressions, after only a short period of initial experimentation. And I suspect that my obsession with photorealistic results may be a significant part of the problem. Perhaps if I stylized my characters in a more fantastical, illustrative, or comic book manner, I might not be so consistently disappointed in the results. But I’ve also tried the same thing in another service, Rendernet, which did a better job than Midjourney on some characters and a worse job on others, despite both working with the same reference images…

The issue remains: sure, the new faces commonly look CLOSE to the original, often close enough to seem related to the original character, but the vast majority of them still don’t look enough like the same person for my satisfaction, even taking possible differences in makeup and lighting into account. And since no one generally even understands what the hell I’m talking about when I start to complain about this stuff offline, I figured it might help to throw some example images up here for other people to review, if only to confirm the assessments I’ve already made.

Hodja (Please, Show Me How You Do It)

Since I began regularly generating and posting imaginary girls, their accompanying captions transformed into more substantial story blurbs, which have grown lengthier and increasingly complex over time. It seemed natural to me to start to tie different girls’ stories together, to place all of them, if not in a single universe, then a shared multiverse (yeah, I know, the multiverse fatigue is already settling in, but bear with me). But without being able to create new images of the same girl or girls, I could only develop any individual’s story so far (i.e., not very far at all).

The somewhat strange, possibly disturbing video you see floating a few paragraphs above this one depicts the inhumantouch character Inka, run through one of the aforementioned janky processes in an attempt to generate additional images of her original face. The movement of her face was sourced from the movement of my own head, recorded selfie-wise on my iPhone, then face-replaced using a “thin plate spline motion model” script written in Python, the intricacies of which are beside the point, but the failings of which are perfectly visible in the video, whenever my/her head turns a bit too far in any direction.

Inka’s story blurb implies that the next step in her fictional journey will be to seek out another one of the inhumantouch girls, named Hodja (yes, after the Todd Rundgren song… the names have to come from somewhere). Some time ago, I did manage to achieve some degree of success in my attempt to create further images of Inka, after training an Astria/Dreambooth model on (further manipulated) still frames extracted from the above video.

As previously mentioned, it took a lot of work to get these results (an amount of work I would readily describe as “inordinate,” even before you start multiplying it by the number of girls already in the canon), and even though they are much smaller than the pixel resolutions I currently prefer to work with, as well as fairly limited in scope (offering only minimal variation from the original’s look and only a small spectrum of possible expressions and poses), they could still be used (along with the other images I used to train the model that made them) to train a newer model capable of higher resolution image output, or as character reference images for Midjourney or other engines… Which is just to say that all that hard work was not a total waste. No matter what technique I choose to pursue next, I would not be starting from scratch when it comes to Inka.

Even less than two years later, the totality of the grunt work required to repeat this process from the very beginning for another girl would be made much easier by Photoshop’s integrated generative AI tools, but they wouldn’t be able to help with the initial phase of the process: the awkward video face-replacement phase, which just didn’t succeed at all when I tried it with Hodja’s face (seemingly because she’s facing slightly downward rather than looking directly forward, or maybe because there’s less overall contrast in her face). The upshot being that unless I want to saddle myself with a great load of rather difficult potential Photoshopping, I’m going to need to pinpoint a method that allows me to generate both Inka and Hodja from the same engine, if not in the same exact image at the same time…

Here’s a detail of Hodja’s original image, which has now (for better or for worse) become my personal de facto benchmark for consistent character creation. Let’s see a sampling of what Midjourney v6 does with her, using this image as a character reference.

Well, these certainly aren’t bad images. Quality-wise, they’re undeniably a noticeable improvement on the original, in terms of convincing realism in both the figure and the background (which is not at all a surprise, considering the original Hodja was created with DALL-E 2 in late 2022, ages ago in AI time). And several of them do come rather close to looking like the original face, which is a highly commendable technological feat, no doubt, but… not one of them is close enough to feel like the same person (at least not to me). Ideally, I don’t want my characters’ identities to be dependent on remembering their particular hair colors (as particular as they may be). I don’t want to create a storyboard in which a different virtual “actress” takes over for the character in each subsequent frame where that character is featured in the shot. I know, I know, it’s a lot to ask, but if you’re going to claim you’re capable of “character consistency,” then dadgum, the character needs to be fucking consistent.

Let’s say I keep messing with this (which I almost certainly will anyway). What’s the best-case scenario? I have to re-roll a couple dozen more generations before I finally happen to produce one that looks like the same person to me. Then, yay, success, problem solved… Except that could mean every subsequent image will take the same amount of effort/re-rolling, if not more, to get another suitable match. Sure, poor me, having to hit a button so many times to conjure people from the ether, but that’s not the point. The point is that this is far from the first time I’ve tried to call attention to an AI that falls somewhat short of performing the magical feats of which its creators claim it is capable. I don’t mean to say I don’t think we’ll get there (and we probably will in short order, considering just how close these character-referenced images have already come), but could we, perhaps, try a little harder not to trip over our own hype on the way?
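To put a rough number on the re-rolling problem, here's a back-of-the-envelope sketch. It assumes each generated face is an independent attempt with the same fixed chance `p` of passing my "same person" test, which is a simplification, and the 4% figure below is a made-up illustration, not something I measured.

```python
def expected_rolls(p):
    """Average number of attempts until the first acceptable face,
    assuming independent attempts with success chance p (geometric model)."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return 1 / p


def chance_of_match(p, n):
    """Chance of at least one acceptable face within n attempts."""
    return 1 - (1 - p) ** n


if __name__ == "__main__":
    p = 0.04  # hypothetical: one face in 25 passes
    print(f"average attempts per usable image: {expected_rolls(p):.0f}")
    print(f"chance of a match within 24 attempts: {chance_of_match(p, 24):.0%}")
```

The key point is that, under this assumption, the cost doesn't shrink over time: every new image of the character costs the same average number of attempts unless something raises `p`.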

On the other hand, as I acknowledged earlier, it may just be too early in the game to pass such a harsh judgement. It’s possible that once I’ve pushed that button a few more dozen (or few more hundred) times, I will finally achieve the first successful duplicate face, and then by adding it next to the original face as a second character reference, I could increase the chances for further success in subsequent generations, reducing the amount of re-rolling required, gradually zeroing in on a more consistently consistent target. But we’ll have to go back to the drawing board (button-pushing board?) to see about that. For now, let’s see how Rendernet fared.

Rendernet, like Astria/Dreambooth, is one of many online instances of the major open-source generative AI engine Stable Diffusion. These results represent my first time using it (although I have previously used numerous other implementations of Stable Diffusion online). Once again, these images are hardly what you would call “bad” by any stretch. We’ve got just one vaguely wonky eyelid, but mostly everything else looks great, if not straight-up photoreal. The material rendering is highly tactile across the board (typical of SDXL models, Stable’s state of the art). A few small spots may need some finessing here and there (Photoshop’s remove tool and generative fill would make short work of them), but as default images right out of the oven go, there’s not much to complain about. The overall differences between some of the variations here are a result of using a smattering of differently tuned image models with the same prompt and character reference (which, on Rendernet, is referred to by the somewhat-more-BDSM-flavored feature name “FaceLock”). But, sadly, in terms of resembling the original Hodja, these faces are just nowhere near locked.

I could make (and have made) use of these kinds of “almost there” images in the past, by positing that if many of the “main” girls already live in alternate universes, images like these represent alternate versions of those girls in yet more universes, sharing the same first name, but differentiated/organized by largely arbitrary six-digit hexadecimal RGB color codes. If we’re going to have a multiverse in the first place, why not run with it, right? And that’s all well and good for just accumulating more postable content that still at least vaguely relates to a central potential storyline, but unfortunately, we’re still no closer to being able to progress that main story featuring our original characters (appearing consistently!) in their own universes.
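As a small illustration of that naming scheme, here's a sketch that splits a label like "Tricia 53A679" into the first name and the RGB color its six-digit hex code stands for. The function name `parse_variant` and the exact "Name HEXCODE" label format are my assumptions for the example.

```python
import re


def parse_variant(label):
    """Split a 'Name RRGGBB' label into (name, (r, g, b)).

    Hypothetical helper; assumes a single-word name followed by
    exactly six hexadecimal digits.
    """
    match = re.fullmatch(r"(\w+) ([0-9A-Fa-f]{6})", label.strip())
    if match is None:
        raise ValueError(f"not a 'Name RRGGBB' label: {label!r}")
    name, code = match.groups()
    # Each pair of hex digits is one 0-255 color channel.
    rgb = tuple(int(code[i:i + 2], 16) for i in (0, 2, 4))
    return name, rgb


if __name__ == "__main__":
    print(parse_variant("Tricia 53A679"))
```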

Astraea

Astraea was the first official inhumantouch girl. She was created via text prompt with DALL-E 2 in August of 2022, then facially finessed with a script called GFPGAN (originally designed to focus specifically on improving the appearance of faces during photo restoration). This was a combination of techniques I would continue to use in the early days, until I started to get annoyed with GFPGAN’s tendency to give every female face very similar makeup, and/or strip away what I thought were some necessary facets of character from a given face, in favor of a more sanitized, uniform, Sears-family-portrait kind of look. Still, I don’t mean to shit on GFPGAN too heavily, as without it, most of the original faces I made with DALL-E 2 would have remained firmly in the uncanny valley (if not qualified for straight-up analog horror), and I might never have continued my journey down the generative AI rabbit hole past this point.

Being the first, Astraea has frequently been a guinea pig for further experimentation. Here, I tried a face replacement method involving Discord, called Insight Face Swap, which took her facial features and pasted them over the faces in other real or generated images.

Well, these are mostly just… weird-looking, to be honest. I might be able to work with one or two of them, but most of the eyes seem rather fakakte, and I’d have to spend time with each to restore or correct them in some way, which is more than likely to make the swapped faces resemble the original even less than they already do.

Some people apparently swear by this method. But it seems that I’d be more likely to swear at it.

Let’s take the two best faceswaps above and the original image, and use them as a triple-strength character reference in Midjourney:

Err, yikes? How could using three character reference images produce results that look even less like the original? And who said anything about veering away from photorealism? Not my prompt, that’s for sure… Rendernet, you won’t let me “FaceLock” to more than one source image at a time, but come on, have you got any good news for me here?

Okay, perhaps it’s just in comparison to that last Midjourney misfire, but I’m starting to warm to the way that Rendernet “sees” things. Sure, a few of these are mis-stylized into some non-existent pseudo-Victorian era, the hair is all over the map, a couple of the foreheads are unusually massive, numerous liberties have been taken with the tip of her nose, and I’m not sure that any of them are a good enough match to be mistaken for the original, but at least these are back on the right track.

Rosalia

Maybe it’s just Hodja’s and Astraea’s faces in particular that are giving me so much trouble. After all, I was able to replicate Inka more effectively, even using that dodgy piecemeal method from years ago. Certainly, if I just keep trying different faces (and we’ve already got plenty to try), at least a few of them are just going to work, right?

Let’s make Rosalia the character reference in Midjourney and go again…

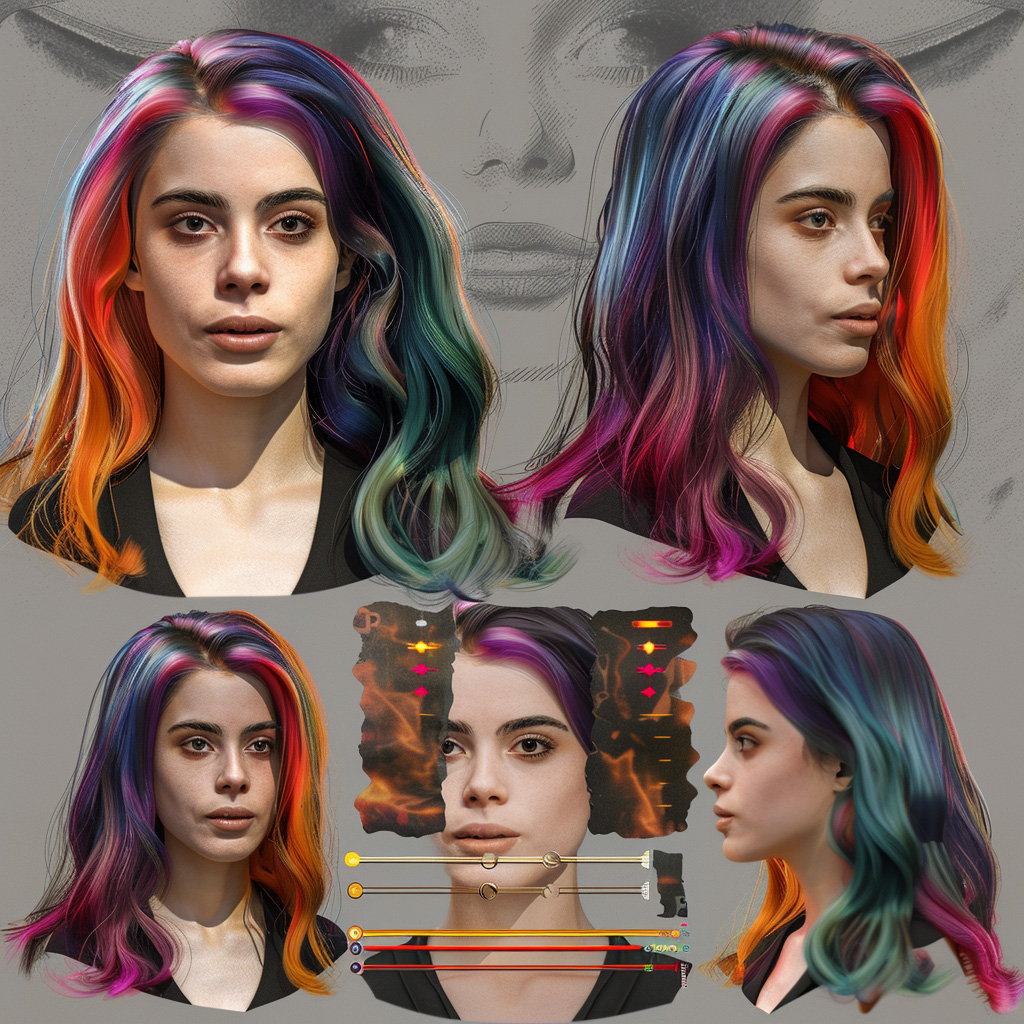

Usually I include a description of the original character in the text component of the prompt to reinforce the elements I want repeated in the new images, but this time I just stripped the text prompt down to almost nothing, leaving only “consistent character sheet, multiple views of the same photoreal character.” And not only do we get those multiple views (albeit in some rather random, occasionally overlapping arrangements), but a few of these are very close to feeling like the same character, that is, until you compare them side by side with the original image, and still, nope, not quite there. How about Rendernet…

As with Hodja, most of the Rendernet results are more full body shots, which I assume is the result of Stable Diffusion putting more weight than Midjourney does on the outfit description in the prompt (I actually did some of these before I tried replicating Rosalia in Midjourney, so I wasn’t yet using the minimal “character sheet” prompt here), and/or experimenting with different aspect ratios (they’re all cropped to 1:1 squares here, but not all of them were originally generated as such). Despite that difference (and the notable variations in body types and apparent ages, and the occasional transposition of her hair colors into her outfit instead), quite a few of these faces are really on point. I might even have to concede that some are good enough to pass the face test and be convincingly deployed (after the usual amount of manual refinement) as new photos of the original character.

Faces are probably always going to present a greater level of difficulty for generative AI than other subjects, just because human beings are so finely attuned to face and expression recognition, starting from their earliest days on earth. But as long as I’m willing to determine which faces are most amenable to this process (at least temporarily stranding those that, like Hodja, just won’t seem to allow themselves to be reproduced accurately, regardless of available methodology), and keep tweaking my prompts and trying slightly different things, chances are I will eventually be able to generate enough images to cherry-pick the kinds of convincing, consistent results that would allow for those select characters’ stories to progress.

Alternatively, I could take a sharp left turn and elect to create an entirely new workflow for this purpose, one that would eliminate nearly all dependence on generative AI. I could take a deep dive into something like Unreal Engine, so I could use its impressive MetaHuman plugin to create a realistic 3D model of my character, which I could then just pose and either include in various 3D scenes or render in 2D and combine with a 2D background in Photoshop. I’ve been considering trying this for a while now, but having recently attempted to install Unreal Engine on my beleaguered 2019 MacBook, I’m once again faced with disappointment in my rapidly aging hardware: Unreal did install successfully, and technically, it runs, but “crawls” would be more accurate. It’s not really swift enough for me to realistically dive into a tutorial, so I’m blocked on that front, at least for now.

But I still don’t know why some faces remain more problematic than others. Could it be caused by some conflict between the AI engines themselves? If I started on Midjourney with a character reference face that was originally generated by Midjourney, not by DALL-E, would the engine be more likely to successfully reproduce it? Well, let’s see about that…

Tricia

Tricia 53A679’s face sprang into existence via Midjourney (along with 3 other Tricias), although her face was then refined (for the closer close-up) using Stable Diffusion and Photoshop. Okay, let’s roll those digital dice…

Buhh. We’re back to not-quite-close-enough/could-be-her-sisters, but definitely not her. Not that a more positive result would have helped at all with my DALL-E “problem” faces, but still, a worthwhile experiment to have run. While we’re looking at Tricia, what about Rendernet again?

Umm, okay. None of them feel especially faithful to the original face, yet they all pretty much look like the same (new) girl. WTF.

There’s not even any consistency to the inconsistency. Back to the generating board, I guess.